Voice Systems

Voice SystemsScalability for Luxury AI Live Voice Art: Orchestrating Sentient Environments

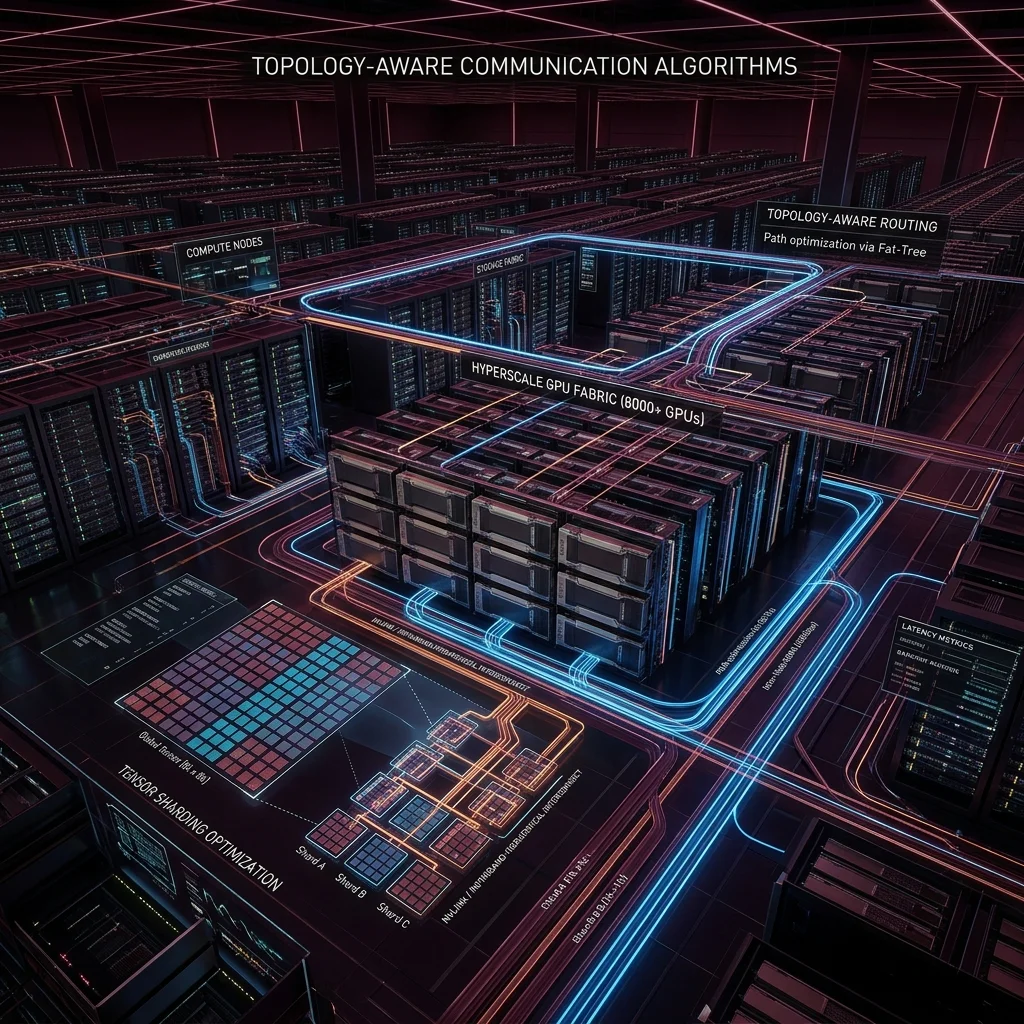

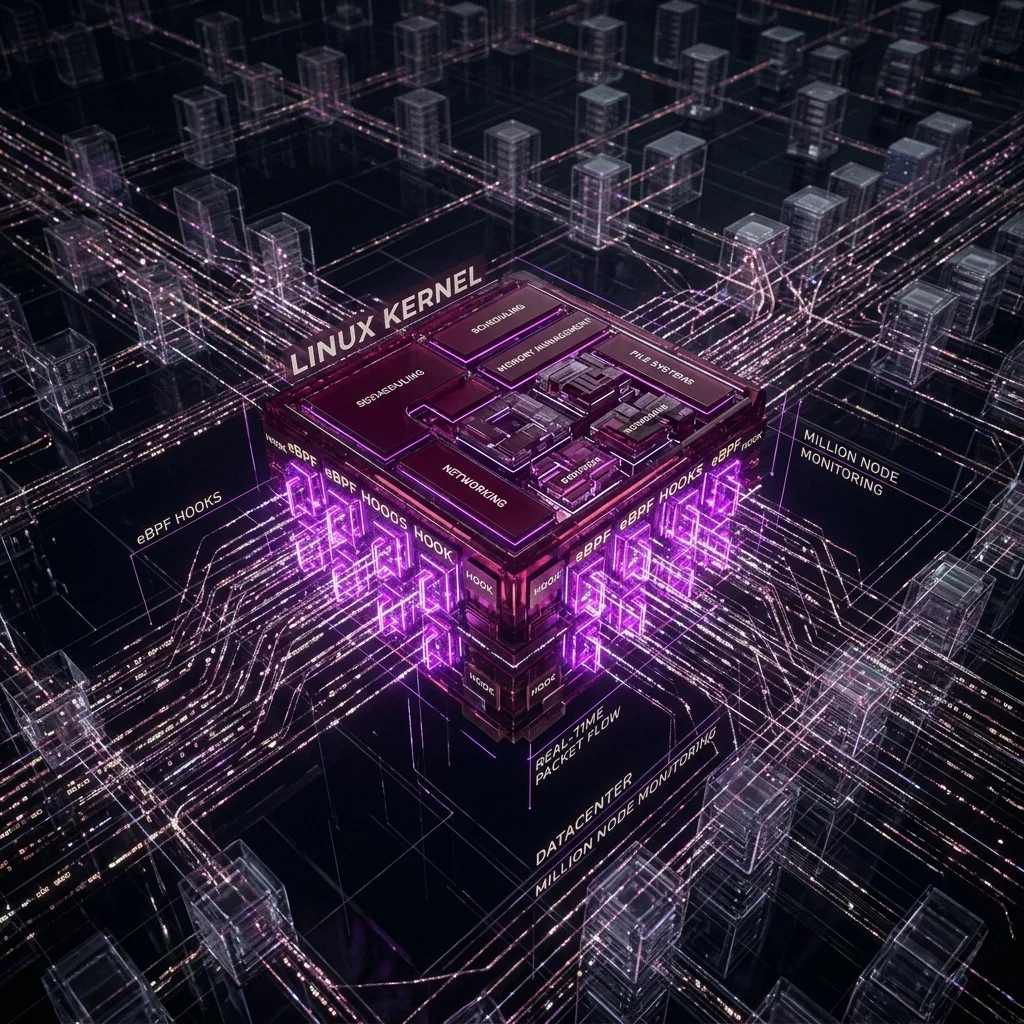

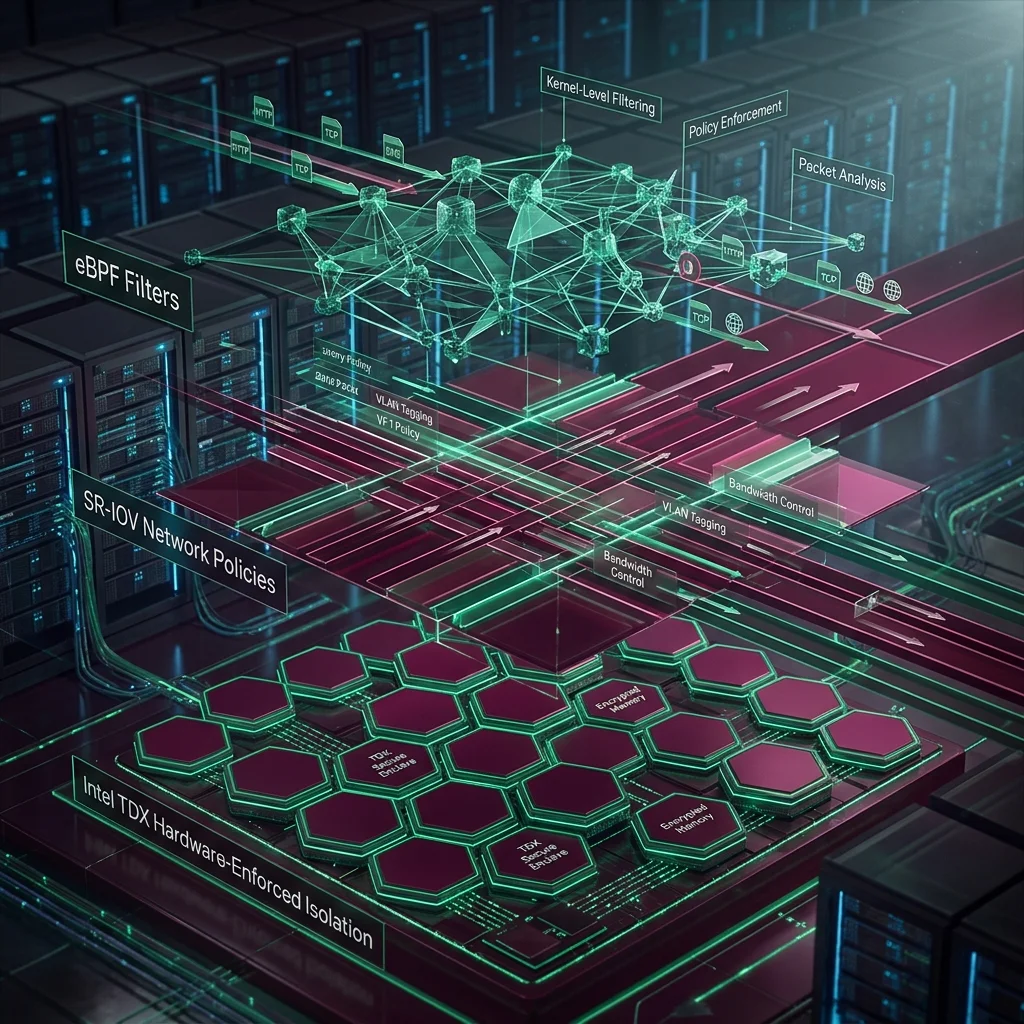

How we scale the infrastructure for high-end agentic voice art—supporting low-latency WebRTC streams, multi-GPU orchestration, and real-time vision sensors for immersive luxury spaces.